The Future of Focus

What the best operators do differently at the AI frontier.

Every week, a new AI tool promises to change how you work. Most won’t.

We set out to understand which tools actually stick and why. So we went straight to the source: 30+ founders, executives, and investors at the leading edge of AI.

We expected to find smarter systems, better prompts, and a few useful tools. What we found was something else entirely.

A small group of operators has pulled so far ahead that their workflows bear almost no resemblance to those inside even the most established companies. They’re not using better tools; they’ve reorganized how they work entirely.

This report is a map of what the front line now looks like. Four discoveries from the leaders who have crossed to the other side, and the principles that separate them from everyone else.

Interviews included individuals from:

The Frontier Has Split

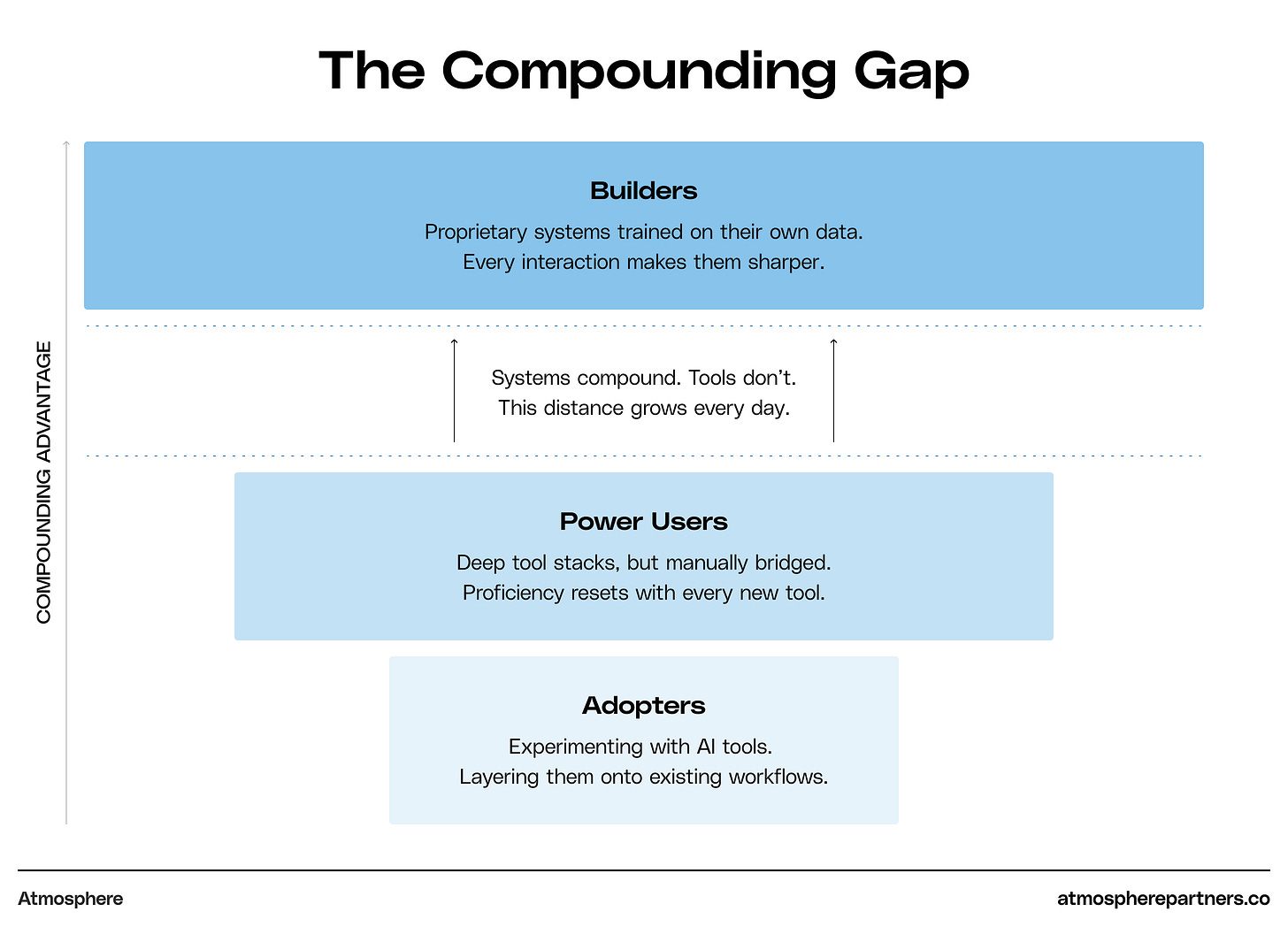

Across our interviews, operators fell into three distinct groups.

At the top: builders, operators who have constructed proprietary AI systems trained on their own data, customers, and workflows. Every interaction makes these systems harder to replicate.

Below them: power users, sophisticated operators running deep tool stacks but still manually bridging the gaps between them.

And then: adopters, experimenting with AI tools, but layering them onto existing ways of working. Inside some of the most prominent companies we spoke with, there is no unified stack, no shared best practices. Individual employees are often paying for their own subscriptions.

The distance between these groups is growing because the builders’ systems compound while everyone else restarts from zero with every new tool.

Across all three tiers, one pattern held: AI-native tools are largely companion tools. They augment existing workflows rather than replace them.

So which companions earn a permanent place?

The Operator Stack

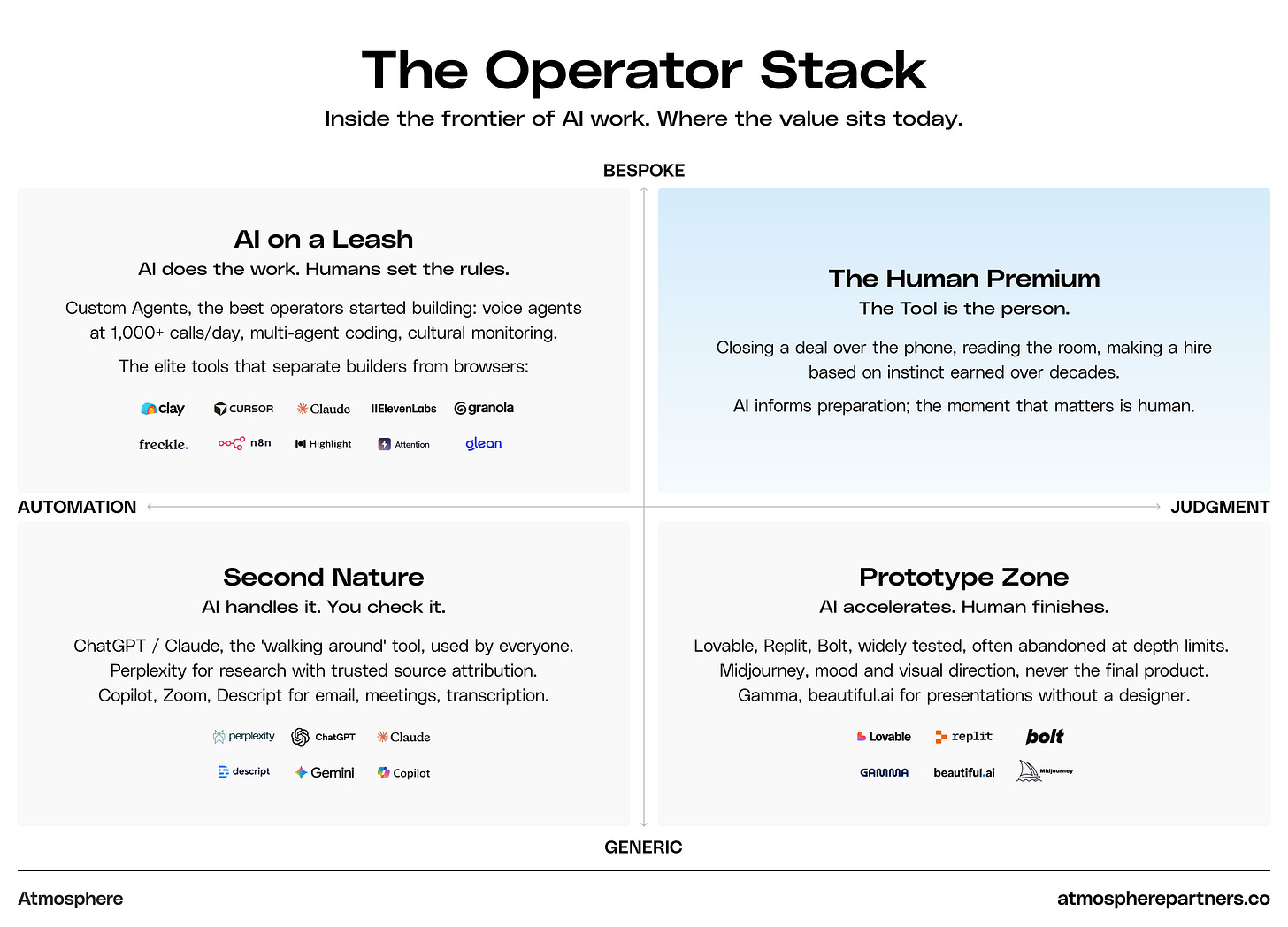

We asked operators at the leading edge of AI a simple question: what tools do you actually use?

Across interviews, the same structure emerged.

This list reflects the preferences of the operators we interviewed, not a comprehensive market map.

Every operator we spoke to had strong opinions about tools. But the deeper we pushed, the more the conversation shifted from what they use to how they act.

Today’s advantage isn’t artificial. It’s knowing where AI ends and you begin.

AI has given everyone the tools to produce good work. But when good becomes free, great becomes more valuable. We found that the difference between the two isn’t more AI; it’s knowing when to put the human back into the machine.

We call this The Imprint.

The moment where judgment overrides generation. Where the human hand re-enters the work and makes it unmistakably yours.

Every operator, team, and company has an Imprint. The question is where yours sits. Step in too early and you lose the leverage AI provides. Step in too late and the work becomes generic.

The people pulling ahead aren’t defined by their AI stack. They win by having the strongest sense of where their Imprint is.

Finding it isn’t intuitive, but across more than thirty interviews, four discoveries surfaced that reveal how the best operators have found theirs.

01

The Last 10% Is the Only 10% That Matters

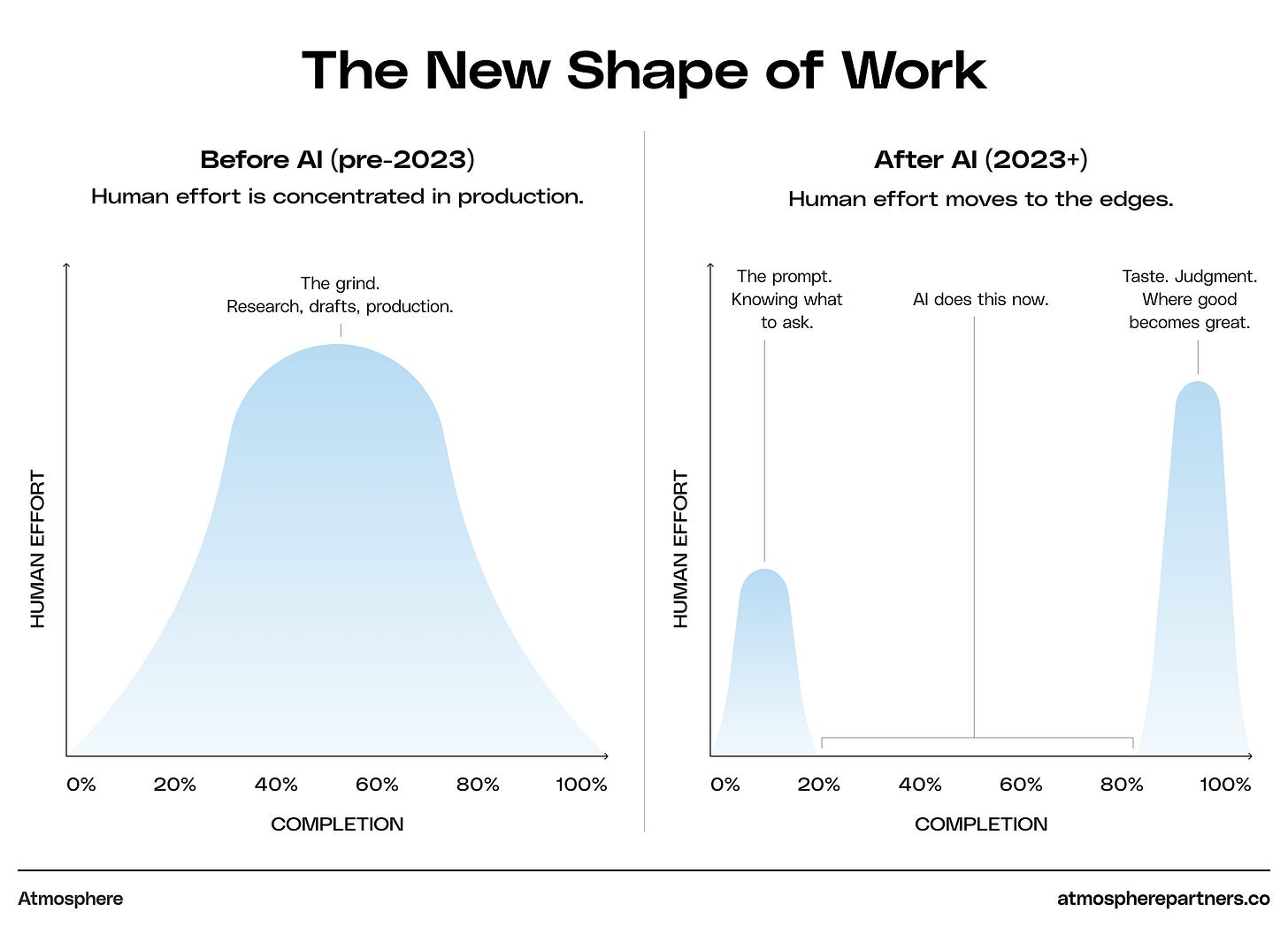

AI compresses production, but expands judgment. The last 10% isn’t just the finishing touch. It’s becoming the most valuable work in the economy.

There’s a phrase in engineering: the ‘march of the nines’. It takes roughly as much effort to go from 90% to 99% as it took to reach 90% in the first place, and as much again to reach 99.9%. The closer you get to completion, the harder each improvement becomes.
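One way to make the phrase concrete is to count the nines. The sketch below is illustrative only: the function names are hypothetical, and it assumes the common reading in which each additional nine removes nine-tenths of the failures that remain, which is why every step feels as expensive as the last.

```python
import math


def nines(reliability: float) -> float:
    """Count the 'nines' in a reliability figure: 0.9 -> 1, 0.99 -> 2, 0.999 -> 3."""
    if not 0.0 <= reliability < 1.0:
        raise ValueError("reliability must be in [0, 1)")
    return -math.log10(1.0 - reliability)


def expected_failures(reliability: float, attempts: int) -> float:
    """Expected number of failures over a given number of attempts."""
    return (1.0 - reliability) * attempts


for level in (0.9, 0.99, 0.999):
    print(f"{level:.1%}: {nines(level):.0f} nine(s), "
          f"about {expected_failures(level, 1000):.0f} failures per 1,000 attempts")
```

Going from 90% to 99% looks like a nine-point gain, but on this reading it means eliminating nine out of every ten remaining failures, the same proportional step as getting from 99% to 99.9%.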

AI has shifted work in a similar way. Until recently, the grind from blank page to draft was where most of the work lived. AI has collapsed that middle. A deck that took two weeks now takes two hours. Research that consumed an afternoon now takes minutes.

What AI hasn’t changed is the effort required to turn good work into great work. That effort lives at the end.

A creative agency founder, working with leading AI companies, uses Midjourney and ElevenLabs to get concepts in front of clients three times faster than the old model of scripts, stock imagery, and decks. But they won’t use AI for the finished work. “It doesn’t allow enough room for the human.” AI is “essentially a sketching tool, it gives us direction to where we’re going.” The final output? “The last 10% is always done in real life, with a human touch.”

An engineering lead at a major cloud platform described building infrastructure tools that reach 90% accuracy, then hitting a wall. “If you can’t trust it 100%, you’re not gonna give it full control. That last 10% is the hardest to bridge.”

But here’s what separated the builders from everyone else. They’re not guessing when to step in; they’ve engineered it. Confidence thresholds at every stage, back-testing on every output, and human override built into the system by design. The Imprint isn’t instinct for these operators; it’s infrastructure.
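What “engineered” might look like in practice can be sketched in a few lines. This is a minimal illustration, not any interviewee’s actual system: the stage functions, the threshold values, and the `ask_human` callback are all hypothetical stand-ins, and it assumes each stage can report a confidence score between 0 and 1.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StageResult:
    output: str
    confidence: float  # 0.0 to 1.0, as reported by the stage itself (assumed)


Stage = Callable[[str], StageResult]


def run_with_override(
    stages: list[tuple[str, Stage, float]],
    task: str,
    ask_human: Callable[[str, StageResult], str],
) -> tuple[str, list[str]]:
    """Run stages in order; hand off to a human whenever confidence is below the threshold."""
    current = task
    escalated: list[str] = []
    for name, stage, threshold in stages:
        result = stage(current)
        if result.confidence < threshold:
            # Human override: the person's version replaces the machine's.
            current = ask_human(name, result)
            escalated.append(name)
        else:
            current = result.output
    return current, escalated


# Toy stages standing in for real model calls (hypothetical).
def draft(text: str) -> StageResult:
    return StageResult(f"draft of {text}", 0.95)


def polish(text: str) -> StageResult:
    return StageResult(f"polished {text}", 0.60)


def human_edit(stage_name: str, result: StageResult) -> str:
    return f"human-edited {result.output}"


final, escalated = run_with_override(
    [("draft", draft, 0.80), ("polish", polish, 0.90)],
    "launch memo",
    human_edit,
)
print(final)      # human-edited polished draft of launch memo
print(escalated)  # ['polish']
```

The design choice worth noticing is that the human is not a reviewer at the end but a branch inside the loop: the threshold decides where the Imprint sits, and moving it is a configuration change rather than a judgment call made under deadline.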

The CTO of a Series A company, whose entire product is generative AI, framed it most directly: “When execution becomes simple, judgment becomes essential.”

AI has made the first mile nearly free, but the last mile just got longer, and now it’s the only mile that differentiates you. One of the most advanced builders we interviewed put it plainly: “Are we going to crank it all out faster? Or are we going to keep the time we have and raise the quality instead?” That choice, speed or craft, is quietly becoming a dividing line.

The Human Signal

AI is very good at producing the right answer. But people rarely respond to the right answer. They respond to the human one, rougher at the edges, more raw, more emotional, harder to replicate. The part that can only come from the last 10%.

The evidence is everywhere. Vinyl hitting a billion dollars in U.S. revenue last year, driven by Gen Z. Film photography sales tripling in five years. Rosalía singing German opera at Berghain. Even the recent demise of the metaverse. In a frictionless world, friction becomes the unmistakable signal that a human was here.

Rosalía, a Spanish pop star, singing German opera: friction as a signal.

The same reflex showed up in our interviews. “AI waters down ideas.” “People are craving human touch.” “It breaks at the final layer.” One more round of prompt roulette rarely makes the output better. It makes it blander. That’s motion masquerading as progress.

The conventional explanation is that AI lacks taste. That isn’t quite right: AI has taste, but its default taste is bad. The chatbot’s voice is earnest. The image generator’s output is emotionally vacant. People clock it immediately. This matters because when someone senses AI in the output, their trust decreases, not because the information is wrong but because the presence of a machine implies the absence of care. The top operators know this and treat AI’s default taste the way a director treats a first rehearsal: as raw material, not the performance.

The last 10% isn’t just where the human adds value, it’s where the human removes the residue of the machine. The time AI frees up is being reinvested here.

AI closed the gap between a blank page and a competent draft. It did not close the gap between good and great.

02

The Discipline of Less

Your edge doesn’t come from what you add, it comes from what you remove. The best operators are using constraint as a forcing function.

Norway is the most decorated Winter Olympic nation in history, despite having roughly one-sixtieth the population of the USA. What explains this? The philosophy is counterintuitive. Athletes spend the majority of their time at low effort, only pushing when it counts. They don’t chase new methods or fads. They only add something when they’re certain it improves the system.

The Norwegian Method in action: Klæbo, six golds in ’26, the most in Winter Olympic history.

The same pattern showed up in our interviews. In a market flooded with new tools, the most disciplined operators rejected most of them. They don’t reach for new, they reach for useful.

Constraint as Strategy

An AI-native founder described his philosophy as “delete and eliminate as many unnecessary things as possible.” He deliberately imposes constraints to force sharper thinking. His company runs what would traditionally take over a hundred people with fewer than thirty. Not because he couldn’t hire, but because he chose not to. This deliberate friction didn’t slow him down; it made him innovate.

A founder building enterprise AI tools took the same approach, “to use the least amount of tools possible,” so that everything the company learns compounds in one place. Notion for documentation, Linear for product, Cursor for code, Claude Projects for everything from sales coaching to recruiting. Fragmentation kills the knowledge that makes a company smarter over time.

They chose Claude over ChatGPT because of editorial sensibility. They chose Granola because it disappears into the workflow. The selection criterion wasn’t “what can this do?” It was “does this earn a place in my day?”

This is what separates builders from adopters. Adopters chase the new tool. Builders go deeper on the system they already have.

The best operators are treating tool selection the way the top investors treat their portfolio: conviction about what to hold, discipline about what to cut.

03

Control Is the Currency

Why is it that in every major technology wave, the early leaders rarely win?

From PCs to the internet to mobile, the pattern repeats. Google didn’t win the web by building websites. It won by controlling discovery. Apple didn’t win mobile by inventing the smartphone. It won by controlling the ecosystem. The technology commoditizes. The tools converge. And value shifts to the systems built on top, to the people who control how the technology is used.

The same shift is now underway in AI. The main LLMs can do roughly the same things at the same level. The tools built on top are converging too. If your AI stack looks like everyone else’s, it gives you the same advantage as everyone else. Which is no advantage at all.

It’s no longer about which tool to use. It’s which systems to build.

A founder scaling a global AI marketplace built multiple custom voice agents using ElevenLabs. One onboards over a thousand customers a month, 65% of all interactions. A second makes one thousand outbound sales calls daily. Within two months, the agents outperformed every team member except the founder. These aren’t off-the-shelf products; they’re proprietary systems trained on his business, his data, his customers. Every interaction makes them smarter.

“We tear up ideas, poke them,” said a veteran at one of the world’s leading AI platforms, describing how his agent teams work. Confidence scoring at every stage. Humans plugged in at every threshold. The system isn’t autonomous; it’s adversarial by design.

A creative technologist took it further: three different LLMs critiquing each other’s code before any human sees the output. “By themselves, they’re not good enough.” He built a cultural monitoring engine on the same principle, scraping sport, social media, and internet culture, delivering a daily Slack digest so analysts spend their time thinking, not searching.
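The cross-critique idea can be sketched as follows. This is an assumption-laden illustration rather than the technologist’s actual setup: the three “models” are simple rule-based stand-ins for real LLM calls, and the names `cross_review`, `ready_for_human`, and the reviewer functions are hypothetical.

```python
from typing import Callable

# A reviewer takes code and returns a list of objections; an empty list means "no issues".
Reviewer = Callable[[str], list[str]]


def cross_review(code: str, author: str, reviewers: dict[str, Reviewer]) -> dict[str, list[str]]:
    """Collect objections from every model except the one that wrote the code."""
    return {
        name: review(code)
        for name, review in reviewers.items()
        if name != author
    }


def ready_for_human(objections: dict[str, list[str]]) -> bool:
    """Only surface the output to a person once no reviewer objects."""
    return all(not issues for issues in objections.values())


# Rule-based stand-ins for three different LLMs (hypothetical).
def flags_nothing(code: str) -> list[str]:
    return []


def flags_bare_except(code: str) -> list[str]:
    return ["bare except"] if "except:" in code else []


def flags_todo(code: str) -> list[str]:
    return ["unfinished TODO"] if "TODO" in code else []


reviewers = {"model_a": flags_nothing, "model_b": flags_bare_except, "model_c": flags_todo}
snippet = "try:\n    run()\nexcept:\n    pass  # TODO handle"

objections = cross_review(snippet, author="model_a", reviewers=reviewers)
print(objections)                   # {'model_b': ['bare except'], 'model_c': ['unfinished TODO']}
print(ready_for_human(objections))  # False
```

Excluding the author from its own review is the point of the pattern: each model’s blind spots are checked by models that do not share them, and the human only sees work that has survived that disagreement.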

Then there’s the CEO who turned Claude Projects into a hiring screener, a sales coach, and a deal copilot. “A second brain that is not biased.” She makes every final call. The system doesn’t replace her judgment, it sharpens it. And competitors can’t reverse-engineer it by buying the same tools.

The gap isn’t between companies that use AI and those that don’t. It’s between those that rent AI tools and those that own AI systems. The first group gets efficiency. The second gets the moat.

04

The Missing Layer

Every operator we spoke with described the same friction. Not a missing tool. A missing architecture. Right now, what’s filling that gap is you.

The tools work; they just don’t talk to each other. There is no shared context between apps, no continuity between sessions, and no awareness of what you were doing five minutes earlier in a different tool.

So you became the integration layer. Every time you copy context from one tool to another or re-brief AI on a decision already made, you’re doing the work the architecture should be doing. The most capable people we interviewed are spending real cognitive load on this infrastructure.

The founder of one of the fastest-growing AI companies is using a custom platform as “duct tape to hold over ten tools together.” A technical lead at a major cloud platform put it simply: the goal is an AI-native operating system, “because we’re still using Mac and Windows from the nineties.”

What operators are asking for is remarkably consistent: “A single layer that sits across everything,” “holds context,” “understands priorities,” and “acts proactively without being prompted.”

But the builders in our interviews aren’t waiting for it to arrive, they’re creating it themselves. Custom orchestration layers, tools stitched into proprietary workflows, context held together by force of will and engineering. The solutions aren’t elegant, but they compound, and that’s the point. The operators who control their own systems aren’t just moving faster on today’s work; they’re building the connective tissue that makes tomorrow’s work better.

The market has started to respond. Several of the operators we interviewed are already using products like Highlight to build context layers across their desktop, and open protocols like MCP are creating infrastructure that lets AI models reach into external tools and data for the first time. But these are early moves. What operators are actually describing is more fundamental: one surface, one context, one point of control that makes the architecture invisible. It doesn’t fully exist yet.

One quiet signal points to where it’s heading. Several operators told us that voice has become their default input mode. They don’t type prompts, they talk. Removing the friction of writing allows ideas to move closer to the speed of thought. This is a signal of what the missing layer actually looks like: not another app, but persistent intelligence you interact with as naturally as a conversation.

The companies that crack this won’t win by building a better point solution. They’ll win by making every point solution work together, with the human at the center.

The Advantage Isn’t Artificial

We set out to understand what the best operators are actually doing with AI. The pattern was clear.

The operators ahead of the curve know exactly where AI ends and they begin. They’ve condensed their stacks, built proprietary systems that compound with every interaction, and refused to automate the last 10% that only a human can do. That’s the Imprint.

As AI makes competent output ubiquitous, the advantage comes from what only a human can add: judgment, taste, conviction.

Every operator in this report is building something that didn’t exist two years ago. So are we. Atmosphere partners with founders scaling AI, health, and climate companies. If you’re navigating the same territory, we’d like to hear from you.

This report was researched, drafted, and produced using a custom AI system built across capture, transcription, analysis, and editing, with human judgment at every stage.

Created by Atmosphere

Atmosphere is a growth partner for companies at inflection points, from initial go-to-market to enterprise transformation. We’ve spent the last two decades at companies large and small: Google, Pinterest, Saatchi & Saatchi, our own startups, and over a dozen more as growth partners. We exist so the most ambitious ideas in AI, health, and climate scale the fastest.
